Local Large Language Models & n8n Workflow Integration: Data Sovereignty and Autonomous Strategic Analysis Architecture

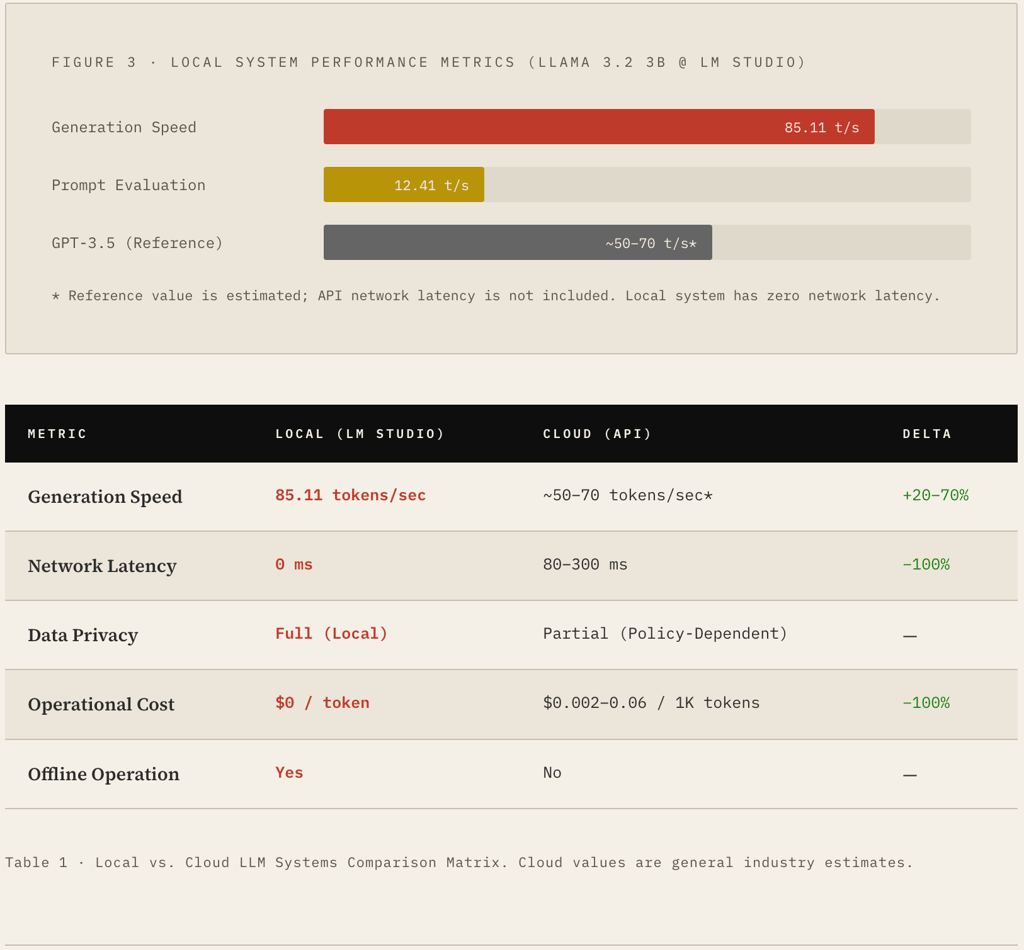

This study examines an autonomous AI workflow architecture developed within "The Core Method" framework, one that eliminates dependency on external cloud services and keeps data on the user's own hardware. The scope includes an end-to-end, locally encrypted analysis system built using Meta's Llama 3.2 (3B) language model, the LM Studio inference engine, and the n8n orchestration layer. The methodology section details the file system access control (N8N_BLOCK_FS_WRITE_ACCESS) and the API bridging techniques, both configured through n8n's terminal settings. Experimental findings show a local generation speed of 85.11 tokens/sec, which the study treats as competitive with cloud-based counterparts. The architecture ultimately presents a secure and sustainable alternative to traditional SaaS models for processing sensitive data, grounded in the principle of Digital Ownership.

Keywords: Local LLM · n8n Automation · Data Privacy · Llama 3.2 · Digital Ownership · Autonomous Agents · LM Studio · API Bridging

3/25/2026 · 3 min read

§ 1 — Introduction

Digital Ownership and the Local AI Paradigm

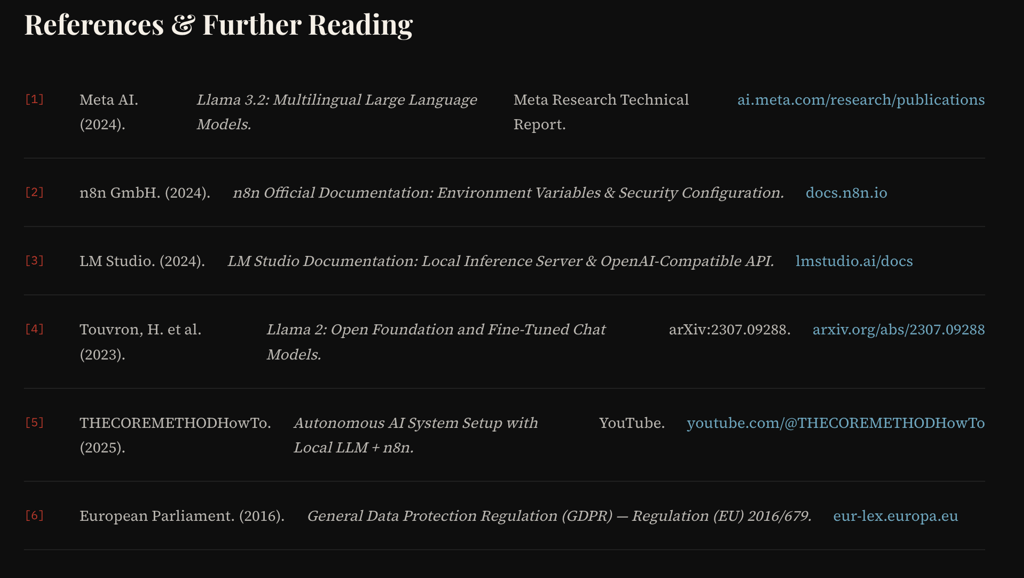

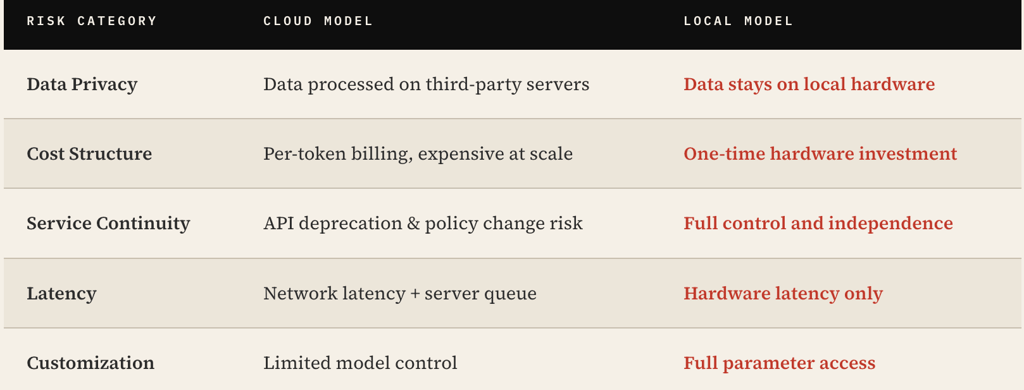

Since 2023, cloud-based large language models (LLMs) offered by companies such as OpenAI, Anthropic, and Google have rapidly proliferated across enterprise and individual use cases. However, this proliferation has introduced serious structural concerns: user data is transmitted to third-party servers, prompt-based information may be incorporated into model training, and service continuity guarantees remain subject to pricing policy changes.

"Data is the oil of the digital age — but if that oil is refined in someone else's facility rather than on your own land, the question of ownership becomes deeply contested."

The Core Method is a philosophy and methodology designed precisely to close this gap. Its core argument is simple: if an AI system can run on the user's local hardware, it achieves structural advantages over cloud alternatives across privacy, cost, and sovereignty dimensions.

This paper is a technical reference that puts this philosophy into practice, laying out the full pipeline step by step: local LLM deployment → workflow orchestration → secure data processing.

1.1 Structural Risks of Cloud-Based Systems

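Current SaaS AI models carry three fundamental vulnerabilities, matching the concerns raised above: user data is transmitted to third-party servers, prompt contents may be incorporated into model training, and service continuity depends on the provider's pricing policy.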

§ 2 — Methodology

Autonomous Local System Architecture

The technical infrastructure of The Core Method system consists of three primary layers: the Inference Engine, the Orchestration Layer, and the Data Security Protocol. These layers communicate over the local network, processing data end-to-end without leaking any information to external endpoints.
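One way to make the "no external endpoints" property checkable is to validate every URL a workflow is configured to call before it runs. The sketch below is illustrative only: the function name `is_local_endpoint` is hypothetical, and treating any DNS name other than `localhost` as external is a deliberately conservative assumption.

```python
import ipaddress
from urllib.parse import urlparse


def is_local_endpoint(url: str) -> bool:
    """Return True if the URL's host is loopback or a private-network address."""
    host = urlparse(url).hostname
    if host is None:
        return False
    if host == "localhost":
        return True
    try:
        addr = ipaddress.ip_address(host)
    except ValueError:
        # Any DNS name other than "localhost" is treated as external (assumption).
        return False
    return addr.is_loopback or addr.is_private


if __name__ == "__main__":
    for candidate in ("http://localhost:1234/v1", "https://api.openai.com/v1"):
        print(candidate, is_local_endpoint(candidate))
```

A check like this does not enforce isolation by itself; it only flags a misconfigured endpoint before data is sent.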

2.1 Inference Engine: LM Studio + Llama 3.2

Meta's Llama 3.2 (3B parameter) model is loaded onto local hardware via the LM Studio interface. LM Studio exposes the model as an OpenAI-compatible REST API, so existing n8n integrations written against the OpenAI API can be redirected to the local server, typically by changing only the API base URL.
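As an illustration of what "OpenAI-compatible" means in practice, the following minimal sketch builds a chat completion request for a local LM Studio server using only the Python standard library. It assumes the server is running on LM Studio's default port 1234; the model identifier `llama-3.2-3b-instruct` and the helper names are hypothetical and should be replaced with the values shown in your own LM Studio instance.

```python
import json
import urllib.request

# Assumption: LM Studio's local server is listening on its default port.
BASE_URL = "http://localhost:1234/v1"
# Assumption: hypothetical identifier; use the model name LM Studio displays.
MODEL = "llama-3.2-3b-instruct"


def build_chat_request(prompt: str, base_url: str = BASE_URL,
                       model: str = MODEL) -> urllib.request.Request:
    """Build an OpenAI-style chat completion request for the local server."""
    body = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
        "temperature": 0.2,
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def ask(prompt: str) -> str:
    """Send the prompt to the local server and return the generated text."""
    with urllib.request.urlopen(build_chat_request(prompt), timeout=120) as resp:
        payload = json.load(resp)
    return payload["choices"][0]["message"]["content"]
```

Calling `ask("Summarize our Q3 risks in three bullet points.")` requires the local server to be running. Only `BASE_URL` distinguishes this request from one sent to a hosted OpenAI-style endpoint, which is why the redirection is so small a change.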

The Llama 3.2 3B model delivers text generation adequate for the analysis tasks described here on consumer-class GPUs, requiring roughly 4 GB of VRAM (the exact figure depends on quantization and context length) while maintaining high inference throughput.
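A rough back-of-the-envelope estimate shows where a figure of this size comes from. The sketch below counts only the memory needed for the weights, using the nominal 3 billion parameters; the quantization levels are illustrative assumptions, since the text does not state which one was used, and the KV cache and runtime overhead come on top.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Memory needed for the model weights alone, in GB (1 GB = 1e9 bytes)."""
    return n_params * bits_per_weight / 8 / 1e9


if __name__ == "__main__":
    N_PARAMS = 3e9  # nominal "3B"; the exact parameter count differs slightly
    for bits in (4, 8, 16):
        print(f"{bits:>2}-bit weights: {weight_memory_gb(N_PARAMS, bits):.1f} GB")
```

Under these assumptions the weights occupy about 1.5 GB at 4 bits, 3.0 GB at 8 bits, and 6.0 GB at 16 bits, so a total of around 4 GB is plausible for a quantized model plus its context buffers.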

2.2 Orchestration Layer: n8n API Bridge

n8n is an open-source, self-hostable workflow automation platform. In The Core Method architecture, n8n functions as a local bridge to the LM Studio API. This setup enables:

Triggers (Webhook, Cron, Manual) → send requests to the LLM

LM Studio response → returned to the n8n workflow

Processed data → written to the local file system or database
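The three steps above can be sketched as a single function. In n8n each step would be a node rather than Python code, so this is only a model of the data flow; the payload keys `id` and `text`, the prompt wording, and the function name `run_pipeline` are all hypothetical.

```python
from pathlib import Path
from typing import Callable


def run_pipeline(trigger_payload: dict, llm: Callable[[str], str],
                 out_dir: Path) -> Path:
    """Trigger payload -> LLM call -> result written to the local file system."""
    # Step 1: the trigger (webhook, cron, or manual run) supplies the input.
    prompt = ("Summarize the following for a strategy report:\n"
              f"{trigger_payload['text']}")
    # Step 2: the local model produces the response.
    answer = llm(prompt)
    # Step 3: the processed result stays on local storage.
    out_dir.mkdir(parents=True, exist_ok=True)
    out_file = out_dir / f"{trigger_payload['id']}.txt"
    out_file.write_text(answer, encoding="utf-8")
    return out_file


if __name__ == "__main__":
    # A stand-in for the real model call, so the sketch runs without a server.
    fake_llm = lambda prompt: f"[draft report based on {len(prompt)} characters]"
    path = run_pipeline({"id": "demo", "text": "Revenue grew in Q3."},
                        fake_llm, Path("reports"))
    print(path.read_text(encoding="utf-8"))
```

Passing the model call in as a parameter keeps the flow testable without a running inference server; in production the callable would wrap the request to LM Studio.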

2.3 Data Security Protocol

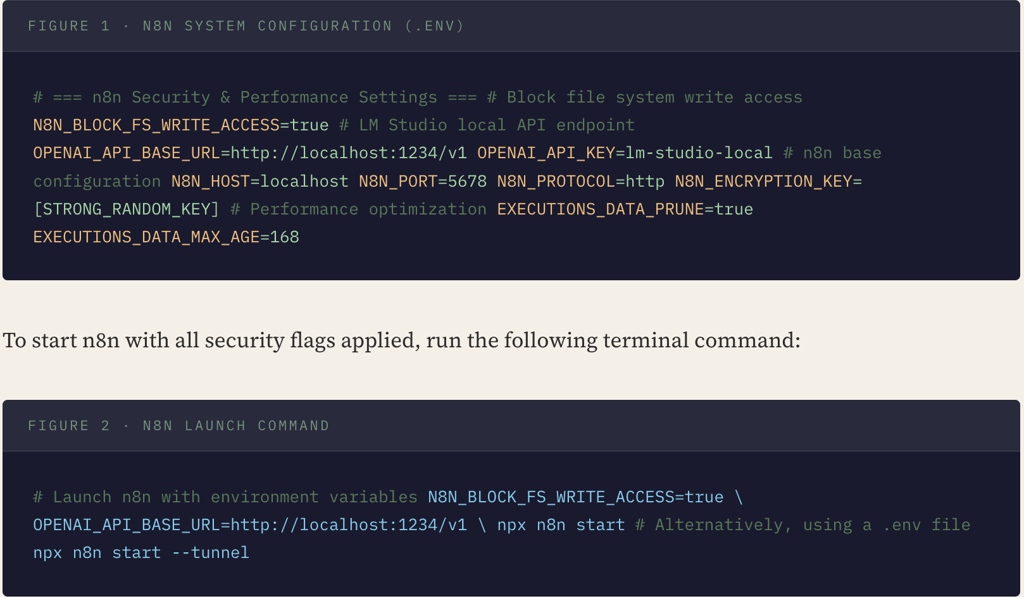

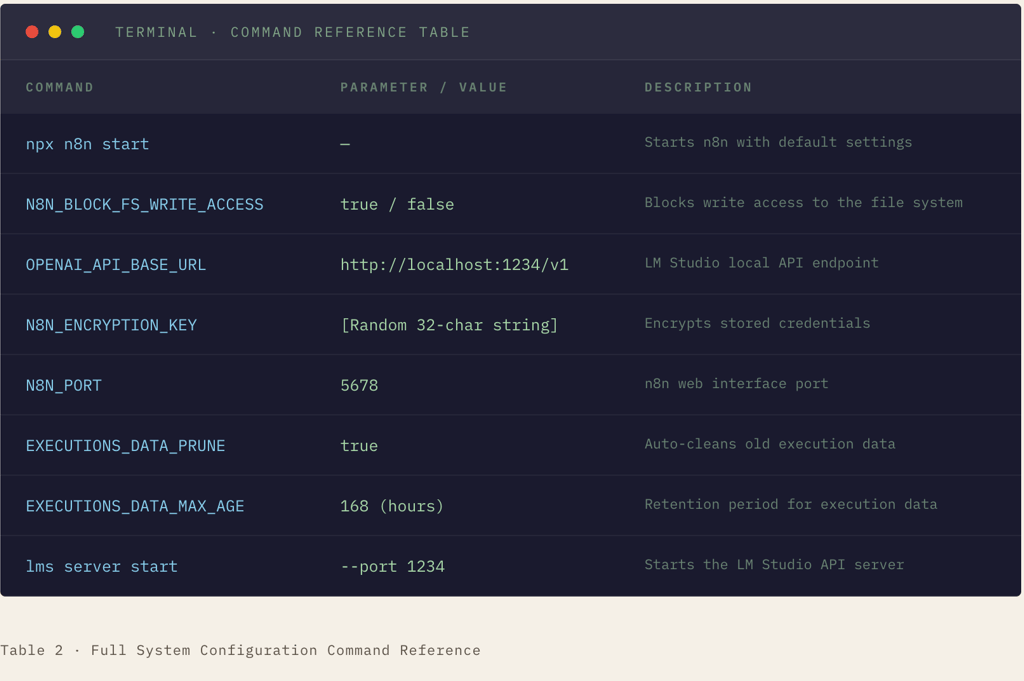

The critical component of system security is n8n's environment variable configuration. In particular, the N8N_BLOCK_FS_WRITE_ACCESS parameter restricts the n8n process's write access to the file system. Because it is an environment variable, the restriction is applied by the n8n application itself at startup rather than by the hardware.
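Because the protection depends on the variable actually being present when n8n starts, a small preflight check can catch a missing setting. The sketch below is hedged in two ways: the variable name is taken from the text as written, and the expected value `"true"` is an assumption that should be confirmed against the documentation for your n8n version. The function name `missing_settings` is hypothetical.

```python
import os
from typing import Mapping

# Name taken from the text; the expected value "true" is an assumption.
REQUIRED_SETTINGS = {"N8N_BLOCK_FS_WRITE_ACCESS": "true"}


def missing_settings(env: Mapping[str, str] = os.environ) -> list[str]:
    """Return the names of required settings that are absent or set differently."""
    return [
        name
        for name, expected in REQUIRED_SETTINGS.items()
        if env.get(name, "").strip().lower() != expected
    ]


if __name__ == "__main__":
    problems = missing_settings()
    if problems:
        print("Refusing to start n8n; check:", ", ".join(problems))
    else:
        print("Security settings present.")
```

Running this before launching n8n turns a silent misconfiguration into an explicit failure.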

§ 3 — Experimental Data

Performance Analysis & Benchmarking

Experimental measurements were conducted on Apple Silicon consumer-grade hardware. The data below was obtained through LM Studio's built-in statistics panel and is analyzed in the context of comparable cloud-based inference services.

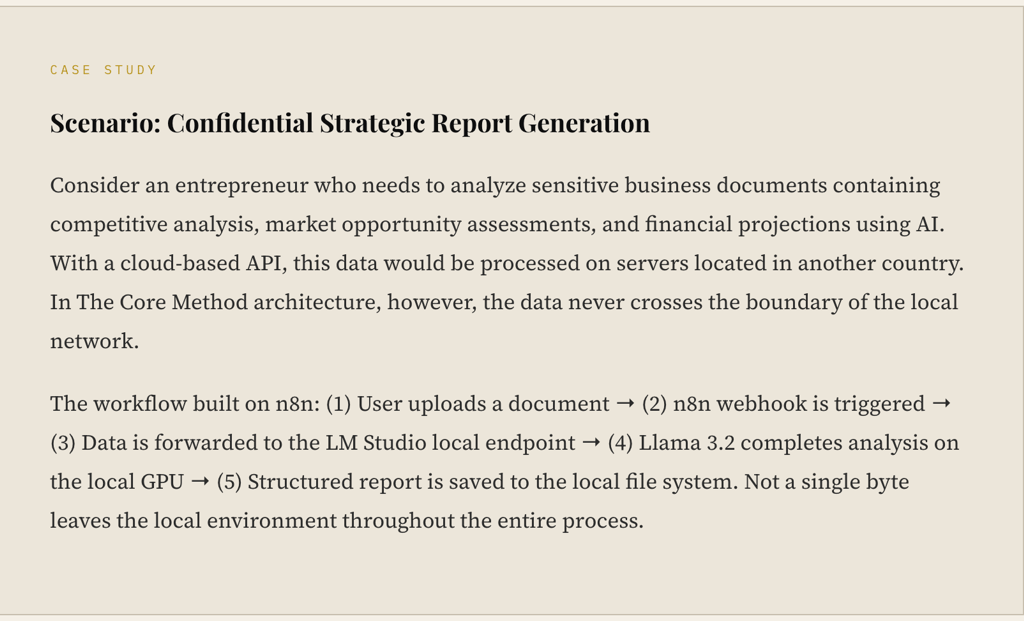
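To put the throughput figure in practical terms, generation speed is simply tokens produced divided by elapsed time, and the same relation gives the expected wait for a response of a given length. The sketch below uses the 85.11 tokens/sec reported in the abstract; the 500-token report length is a hypothetical example, not a measured value.

```python
def tokens_per_second(n_tokens: float, elapsed_s: float) -> float:
    """Generation speed from a token count and the wall-clock time it took."""
    if elapsed_s <= 0:
        raise ValueError("elapsed_s must be positive")
    return n_tokens / elapsed_s


def generation_time_s(n_tokens: float, rate_tok_per_s: float) -> float:
    """Expected time to generate n_tokens at a steady rate."""
    if rate_tok_per_s <= 0:
        raise ValueError("rate_tok_per_s must be positive")
    return n_tokens / rate_tok_per_s


if __name__ == "__main__":
    # 85.11 tokens/sec is the reported figure; 500 tokens is hypothetical.
    print(f"{generation_time_s(500, 85.11):.2f} s")  # prints "5.87 s"
```

This counts generation only; prompt processing time and model load time are not included.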

§ 4 — Case Study

Strategic Business Plan Automation

This section examines a real-world application of The Core Method architecture in case study format. The application is a fully autonomous analysis pipeline in which sensitive business data is processed on a local GPU, with no internet connection required, to generate strategic reports.

4.1 Enterprise Cybersecurity Implications

The significance of this architecture from a corporate security perspective can be summarized under three headings:

GDPR & CCPA Compliance: because data processing occurs without any third-party transfer, legal obligations around external data transfers are greatly simplified.

Data Breach Risk: network isolation removes the external API channel from the attack surface.

Auditability: all log records are maintained locally and never leave the environment.
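The auditability point can be illustrated with a local, append-only log. This is a sketch under stated assumptions rather than a description of the system's actual logging: the record fields, the JSON Lines format, and the function name `append_audit_record` are all hypothetical. The prompt is stored as a SHA-256 hash so the log itself does not duplicate sensitive content.

```python
import hashlib
import json
from datetime import datetime, timezone
from pathlib import Path


def append_audit_record(log_path: Path, workflow: str, prompt: str) -> dict:
    """Append one JSON line describing a workflow run to a local log file."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "workflow": workflow,
        # Hash instead of raw text, so the log holds no sensitive content.
        "prompt_sha256": hashlib.sha256(prompt.encode("utf-8")).hexdigest(),
    }
    with log_path.open("a", encoding="utf-8") as fh:
        fh.write(json.dumps(record) + "\n")
    return record


if __name__ == "__main__":
    rec = append_audit_record(Path("audit.jsonl"), "business-plan", "Q3 figures")
    print(rec["workflow"], rec["prompt_sha256"][:12])
```

Each run adds one line, so the file can be reviewed or archived with ordinary text tools without leaving the machine.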

Appendix A — System Configuration Commands

Installation & Authorization Protocol